[CompTIA] CV0-004 - Cloud+ Exam Dumps & Study Guide

# Complete Study Guide for the CompTIA Cloud+ (CV0-004) Exam

CompTIA Cloud+ (CV0-004) is the latest version of the intermediate-level certification designed to validate the knowledge and skills of IT professionals in deploying, managing, and maintaining secure cloud solutions across diverse environments. Whether you are a cloud engineer, a systems administrator, or a network analyst, this certification proves your ability to handle the challenges of modern cloud operations.

## Why Pursue the CompTIA Cloud+ Certification?

In an era of increasing cloud adoption, organizations need highly skilled professionals to manage and protect their cloud infrastructures. Earning the Cloud+ badge demonstrates that you:

- Can deploy and manage secure cloud solutions across diverse environments.

- Understand the technical aspects of cloud operations and how to apply them to identify and resolve issues.

- Can analyze security risks and develop mitigation strategies for cloud workloads.

- Understand the legal and regulatory requirements for data security and privacy in the cloud.

- Can provide technical guidance on cloud-related projects.

## Exam Overview

The CompTIA Cloud+ (CV0-004) exam consists of a maximum of 90 multiple-choice and performance-based questions. You are given 90 minutes to complete the exam, and the passing score is 750 on a scale of 100 to 900.

### Key Domains Covered:

1. **Security (20%):** This domain focuses on your ability to implement security controls for cloud solutions. You'll need to understand network security, endpoint security, and application security.

2. **Deployment (23%):** Here, the focus is on your knowledge of cloud deployment techniques and tools. You must know how to install and configure cloud resources.

3. **Operations and Support (22%):** This section covers your ability to monitor and manage cloud solution performance. You must understand cloud monitoring tools and how to troubleshoot cloud-related issues.

4. **Cloud Architecture and Design (13%):** This domain focuses on your ability to design secure and scalable cloud architectures. You'll need to understand the different cloud service models (IaaS, PaaS, SaaS) and how to design for high availability and reliability.

5. **Troubleshooting (22%):** This domain tests your ability to troubleshoot cloud-related issues. You must be proficient with various troubleshooting tools and techniques.

## Top Resources for Cloud+ Preparation

Successfully passing the Cloud+ requires a mix of theoretical knowledge and hands-on experience. Here are some of the best resources:

- **Official CompTIA Training:** CompTIA offers specialized digital and classroom training specifically for the Cloud+ certification.

- **Cloud+ Study Guide:** The official study guide provides a comprehensive overview of all the exam domains.

- **Hands-on Practice:** There is no substitute for building and managing cloud solutions. Set up your own cloud lab and experiment with different cloud architectures and tools.

- **Practice Exams:** High-quality practice questions are essential for understanding the intermediate-level exam format. Many candidates recommend using resources like [notjustexam.com](https://notjustexam.com) for their realistic and challenging exam simulations.

## Critical Topics to Master

To excel in the Cloud+, you should focus your studies on these high-impact areas:

- **Cloud Infrastructure and Management:** Master the nuances of deploying and managing secure cloud solutions across diverse environments.

- **Security in the Cloud:** Know how to implement security controls for cloud solutions, including firewalls and intrusion detection systems.

- **Cloud Operations and Monitoring:** Understand cloud monitoring tools and how to manage cloud solution performance.

- **Troubleshooting Cloud Issues:** Master the principles of troubleshooting cloud-related issues and how to resolve them using various tools and techniques.

- **Cloud Governance and Compliance:** Understand the legal and regulatory requirements for data security and privacy in the cloud.

## Exam Day Strategy

1. **Pace Yourself:** With up to 90 questions in 90 minutes, you have about one minute per question. If a question is too complex, flag it and move on.

2. **Read the Scenarios Carefully:** Intermediate-level questions are often scenario-based. Pay attention to keywords like "most likely," "least likely," and "best way."

3. **Use the Process of Elimination:** If you aren't sure of the right choice, eliminating the wrong ones significantly increases your chances.

## Conclusion

The CompTIA Cloud+ (CV0-004) is a significant investment in your career. It requires dedication and a deep understanding of cloud principles and technical skills. By following a structured study plan, leveraging high-quality practice exams from [notjustexam.com](https://notjustexam.com), and gaining hands-on experience, you can master the complexities of cloud operations and join the elite group of certified cloud professionals.

## Free CompTIA CV0-004 Cloud+ Practice Questions Preview

Question 1

A software engineer needs to transfer data over the internet using programmatic access while also being able to query the data. Which of the following will best help the engineer to complete this task?

- A. SQL

- B. Web sockets

- C. RPC

- D. GraphQL

Correct Answer:

D

Explanation:

I agree with the suggested answer, D (GraphQL). While some community members favor WebSockets for their real-time capabilities, the requirement to query the data during programmatic transfer over the internet is the defining feature of GraphQL, which was designed as a query language for APIs.

Reason

GraphQL is the best fit because it provides a complete and understandable description of the data in your API, giving clients the power to query exactly what they need and nothing more. It allows for programmatic access over standard web protocols (HTTP) and is specifically designed to aggregate data from multiple sources through a single endpoint using a structured query syntax.

Why the other options are not as suitable

- Option A is incorrect because SQL (Structured Query Language) is used for managing and querying data within a relational database management system, but it is not a standard protocol for transferring data over the internet via programmatic access; it typically requires a database driver and is rarely exposed directly to the public internet for security reasons.

- Option B is incorrect because WebSockets provide a full-duplex communication channel over a single TCP connection for real-time data streaming, but they do not inherently provide a standardized language or mechanism for querying specific data subsets like GraphQL does.

- Option C is incorrect because RPC (Remote Procedure Call) focuses on executing a function or subroutine on a remote server as if it were local; while it allows programmatic access, it is a functional call model rather than a data-querying model.
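
To make the "query while transferring" point concrete, here is a minimal sketch of how a client typically sends a GraphQL query over HTTP: a POST whose JSON body carries a `query` string and optional `variables`. The endpoint URL, the `orders` field, and its `since` argument are hypothetical; the request is built but not sent, so the sketch runs offline.

```python
import json
import urllib.request

# Hypothetical endpoint; a real GraphQL API would publish its own URL.
ENDPOINT = "https://api.example.com/graphql"

# The client names exactly the fields it wants back (id, total) and filters
# server-side through a variable, instead of downloading whole resources.
QUERY = """
query RecentOrders($since: String!) {
  orders(since: $since) {
    id
    total
  }
}
"""


def build_graphql_request(query: str, variables: dict) -> urllib.request.Request:
    """Build (but do not send) an HTTP POST that carries a GraphQL query."""
    body = json.dumps({"query": query, "variables": variables}).encode("utf-8")
    return urllib.request.Request(
        ENDPOINT,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = build_graphql_request(QUERY, {"since": "2024-01-01"})
# Sending it would be: urllib.request.urlopen(req), which returns a JSON
# document shaped like {"data": {"orders": [...]}}.
```

Compared with calling a fixed REST resource URL, the shape of the response here is decided by the query text itself, which is why GraphQL fits the "query the data" requirement.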

Question 2

Which of the following is a field of computer science that enables computers to identify and understand objects and people in images and videos?

- A. Image reconstruction

- B. Facial recognition

- C. Natural language processing

- D. Computer vision

Correct Answer:

D

Explanation:

I agree with the community choice of D. Computer vision. It is the comprehensive field that encompasses the technology required for machines to interpret and process visual data, including identifying objects and people.

Reason

Computer vision is the correct answer because it is the broad field concerned with enabling computers to extract high-level understanding from digital images and videos. It uses machine learning and deep learning models to automate tasks that the human visual system can do, such as object detection and facial identification.

Why the other options are not as suitable

- Option A is incorrect because image reconstruction refers to the specific process of recreating an image from incomplete or noisy data, such as in medical CT scans, rather than high-level understanding of the objects within it.

- Option B is incorrect because facial recognition is a specific application, or subset, of computer vision, not the entire field; the question asks for the broad field that covers both objects and people.

- Option C is incorrect because Natural language processing (NLP) is a field of AI focused on the interaction between computers and human language (text/speech), not visual data.

Question 3

A company needs to deploy its own code directly in the cloud without provisioning additional infrastructure. Which of the following is the best cloud service model for the company to use?

- A. PaaS

- B. SaaS

- C. IaaS

- D. XaaS

Correct Answer:

A

Explanation:

I agree with the chosen answer A (PaaS). In the context of the CV0-004 exam, the requirement to deploy custom code while abstracting away the management and provisioning of the underlying infrastructure is the defining characteristic of Platform as a Service.

Reason

Option A is correct because PaaS provides a pre-configured environment including the operating system, middleware, and runtime. This allows developers to upload and execute their own applications (deploying code) without the administrative burden of configuring virtual machines, storage, or networking (provisioning infrastructure).

Why the other options are not as suitable

- Option B is incorrect because SaaS provides a complete, ready-to-use software application managed by a vendor; it does not typically allow a company to deploy and run its own custom-developed code.

- Option C is incorrect because IaaS requires the user to provision and manage the virtual machines, operating systems, and storage themselves, which contradicts the requirement to avoid provisioning infrastructure.

- Option D is incorrect because XaaS is a generalized, umbrella term for all cloud services and is not a specific technical service model used to define code deployment environments.
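
As an illustration of "deploy your own code without provisioning infrastructure," the sketch below is the kind of artifact a team hands to a PaaS: application code and nothing else. It assumes a Python PaaS runtime that serves a standard WSGI callable (PEP 3333); the module name `app.py` and the way a given platform discovers the `application` object are assumptions that vary by provider. The operating system, Python runtime, web server, and scaling are left to the platform.

```python
# app.py (hypothetical name): the only artifact the team deploys.
# No VM, OS, or web-server configuration appears here; the PaaS supplies those.


def application(environ, start_response):
    """Minimal WSGI app: answer every request with a plain-text greeting."""
    path = environ.get("PATH_INFO", "/")
    body = f"Hello from {path}\n".encode("utf-8")
    start_response(
        "200 OK",
        [
            ("Content-Type", "text/plain; charset=utf-8"),
            ("Content-Length", str(len(body))),
        ],
    )
    return [body]
```

For a quick local check, the standard library's `wsgiref.simple_server.make_server("127.0.0.1", 8000, application).serve_forever()` can serve the same callable; on the platform, its own server imports `application` instead.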

Question 4

A company just learned that the data in its object storage was accessed by an unauthorized party. Which of the following should the company have done to make the data unusable?

- A. The company should have switched from object storage to file storage.

- B. The company should have hashed the data.

- C. The company should have changed the file access permissions.

- D. The company should have encrypted the data at rest.

Correct Answer:

D

Explanation:

I agree with the community and the suggested answer of Option D. The key phrase in the question is "make the data unusable"; encryption is the primary security control designed to ensure that if data is exfiltrated or accessed by an unauthorized party, it remains unreadable and useless without the corresponding decryption key.

Reason

Option D is correct because encryption at rest protects data stored on persistent media (like object storage) by converting it into ciphertext. Even if an attacker bypasses access controls and retrieves the physical or logical data objects, the information cannot be understood or utilized without the cryptographic keys, directly satisfying the requirement to make the data unusable.

Why the other options are not as suitable

- Option A is incorrect because switching from object storage to file storage changes the architecture of how data is organized but does not inherently protect the data content from being read once accessed.

- Option B is incorrect because hashing is a one-way cryptographic function used for integrity verification and password storage; it is not a reversible method for securing entire datasets while keeping them functional for legitimate use.

- Option C is incorrect because file access permissions (ACLs) are a preventative control designed to stop unauthorized access from occurring; however, the question specifies that the party has already accessed the data, meaning these permissions were either bypassed or misconfigured, and they do not make the data itself unusable once it is in the attacker's possession.
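
A minimal sketch of requesting encryption at rest for an object store, assuming AWS S3 and the boto3 SDK. `ServerSideEncryption` and `SSEKMSKeyId` are real `put_object` parameters; the bucket name, object key, and KMS key ID below are hypothetical. The helper only assembles the arguments, so it runs without credentials. One caveat worth knowing: S3 decrypts server-side-encrypted objects transparently for any principal allowed to read them (and, with SSE-KMS, allowed to use the key), so SSE mainly protects the underlying storage media; encrypting on the client before upload, with keys held elsewhere, is what keeps data unusable even if bucket permissions leak.

```python
from typing import Optional


def build_encrypted_put(bucket: str, key: str, data: bytes,
                        kms_key_id: Optional[str] = None) -> dict:
    """Return keyword arguments for boto3's S3 put_object() with SSE enabled.

    With a KMS key, request SSE-KMS ("aws:kms"); otherwise fall back to
    S3-managed keys ("AES256", also called SSE-S3).
    """
    params = {"Bucket": bucket, "Key": key, "Body": data}
    if kms_key_id:
        params["ServerSideEncryption"] = "aws:kms"
        params["SSEKMSKeyId"] = kms_key_id
    else:
        params["ServerSideEncryption"] = "AES256"
    return params


# Hypothetical names, for illustration only.
params = build_encrypted_put(
    "example-crm-exports", "reports/q1.csv", b"id,total\n1,42\n",
    kms_key_id="example-kms-key-id",
)
# With credentials configured, the upload itself would be:
#   import boto3
#   boto3.client("s3").put_object(**params)
```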

Question 5

A customer relationship management application, which is hosted in a public cloud IaaS network, is vulnerable to a remote command execution vulnerability. Which of the following is the best solution for the security engineer to implement to prevent the application from being exploited by basic attacks?

- A. IPS

- B. ACL

- C. DLP

- D. WAF

Correct Answer:

D

Explanation:

I agree with the suggested answer D. While multiple security layers provide defense-in-depth, a Web Application Firewall (WAF) is the most specific and effective solution for protecting a Customer Relationship Management (CRM) application against application-layer exploits like Remote Command Execution (RCE).

Reason

Option D is correct because a WAF operates at Layer 7 (the Application Layer) of the OSI model. It is specifically designed to inspect HTTP/HTTPS traffic for malicious patterns, such as those used in RCE, SQL injection, and XSS. Assuming the CRM is served over HTTP(S), which is typical for CRM applications, the WAF can filter out 'basic attacks' by identifying and blocking known exploit signatures before they reach the vulnerable code.

Why the other options are not as suitable

- Option A is incorrect because an IPS (Intrusion Prevention System) typically focuses on network-wide protocol anomalies and signature-based threats at Layer 3 and 4. While some modern 'Next-Gen' IPS have application awareness, a WAF is a superior 'best solution' for web-specific vulnerabilities.

- Option B is incorrect because an ACL (Access Control List) is a static filter that permits or denies traffic based on IP addresses, ports, and protocols; it cannot inspect the payload of a packet to stop a command execution attack.

- Option C is incorrect because DLP (Data Loss Prevention) is designed to prevent sensitive data from leaving the network (exfiltration), not to prevent inbound exploitation of an application vulnerability.
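
To show what "inspecting the payload at Layer 7" means, here is a deliberately tiny, signature-style filter of the sort a WAF rule applies to decoded HTTP parameters before they reach the application. The two patterns are illustrative assumptions, not a vetted rule set; real deployments rely on managed rule sets (for example, the OWASP Core Rule Set) that are far broader. An ACL, by contrast, would only ever see source addresses, ports, and protocols, never these parameter values.

```python
import re
from urllib.parse import parse_qsl

# Illustrative OS command-injection signatures (assumptions, not a real rule set):
#  1. a shell metacharacter followed by a common command name
#  2. $(...) command substitution
COMMAND_INJECTION_PATTERNS = [
    re.compile(r"[;&|`]\s*(cat|ls|id|whoami|wget|curl|nc|bash|sh)\b", re.IGNORECASE),
    re.compile(r"\$\([^)]*\)"),
]


def is_blocked(query_string: str) -> bool:
    """Return True if any URL-decoded parameter value matches a signature."""
    for _name, value in parse_qsl(query_string, keep_blank_values=True):
        if any(pattern.search(value) for pattern in COMMAND_INJECTION_PATTERNS):
            return True
    return False


# "%3B%20cat" decodes to "; cat", the classic injection shape.
print(is_blocked("host=example.com%3B%20cat%20/etc/passwd"))
print(is_blocked("contact=alice&note=hello"))
```

Decoding before matching matters: attackers routinely URL-encode metacharacters, which is one reason payload inspection belongs at the application layer.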

Question 6

Which of the following is a difference between a SAN and a NAS?

- A. A SAN works only with fiber-based networks.

- B. A SAN works with any Ethernet-based network.

- C. A NAS uses a faster protocol than a SAN.

- D. A NAS uses a slower protocol than a SAN.

Correct Answer:

D

Explanation:

I agree with the community consensus for Option D. SAN is designed for high-throughput, low-latency block-level access, whereas NAS typically uses file-level protocols that carry more overhead and are constrained by standard network traffic.

Reason

Option D is correct because SAN (Storage Area Network) utilizes high-performance block-level protocols like Fibre Channel (FC) or iSCSI, which allow for direct disk access with minimal overhead. In contrast, NAS (Network Attached Storage) uses file-level protocols such as NFS or SMB, which require more processing (file system encapsulation) and generally operate at slower speeds over shared LAN resources.

Why the other options are not as suitable

- Option A is incorrect because a SAN can also operate over Ethernet using protocols like iSCSI or FCoE (Fibre Channel over Ethernet); it is not limited strictly to fiber-optic cabling.

- Option B is incorrect because while a SAN can work on Ethernet, it does not work with 'any' Ethernet network without the proper protocol support and configuration (like jumbo frames or dedicated VLANs), and many high-end SANs still use dedicated Fibre Channel fabrics.

- Option C is incorrect because NAS protocols like CIFS/SMB and NFS are generally slower than block-level protocols, due to the additional file-system processing performed by the NAS head.

Question 7

A cloud engineer is troubleshooting an application that consumes multiple third-party REST APIs. The application is randomly experiencing high latency. Which of the following would best help determine the source of the latency?

- A. Configuring centralized logging to analyze HTTP requests

- B. Running a flow log on the network to analyze the packets

- C. Configuring an API gateway to track all incoming requests

- D. Enabling tracing to detect HTTP response times and codes

Correct Answer:

D

Explanation:

I agree with the community and the suggested answer of D. In a cloud environment, particularly when dealing with microservices or external integrations, distributed tracing is the standard method for identifying performance bottlenecks across service boundaries.

Reason

Option D is correct because tracing (such as AWS X-Ray, Google Cloud Trace, or Azure Monitor) provides a detailed timeline of a request as it travels through various components. It specifically measures the time spent waiting for a third-party REST API response, allowing the engineer to see exactly which external call is causing the latency and what HTTP status codes are being returned.

Why the other options are not as suitable

- Option A is incorrect because while centralized logging provides data, it often lacks the precise timing correlation and visual waterfall view required to pinpoint intermittent latency between specific service hops.

- Option B is incorrect because flow logs operate at Layer 4 of the OSI model, showing IP addresses and ports; they do not provide HTTP-level visibility into API response times or application logic.

- Option C is incorrect because an API gateway typically manages incoming requests to your own services; while some can log outbound traffic, they are not primarily designed to trace the internal application logic and external dependency latency in the same way a tracing tool does.
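
The core idea of tracing can be sketched with the standard library: wrap each outbound call in a timed span that records the dependency name, duration, and HTTP status, all tagged with one trace ID. This is a hand-rolled illustration under simple assumptions (a single process, and a hypothetical `call_api` function that sleeps instead of making real HTTP calls); in practice the engineer would use the provider's tracing service or OpenTelemetry instrumentation, which also propagates trace IDs across service boundaries.

```python
import time
import uuid
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Span:
    trace_id: str
    name: str
    start: float
    duration_ms: float = 0.0
    status_code: Optional[int] = None


@dataclass
class Tracer:
    spans: List[Span] = field(default_factory=list)

    @contextmanager
    def span(self, trace_id: str, name: str):
        s = Span(trace_id=trace_id, name=name, start=time.perf_counter())
        try:
            yield s
        finally:
            # Record the duration even if the wrapped call raises.
            s.duration_ms = (time.perf_counter() - s.start) * 1000
            self.spans.append(s)

    def slowest(self) -> Span:
        return max(self.spans, key=lambda s: s.duration_ms)


def call_api(name: str, delay_s: float) -> int:
    """Hypothetical stand-in for a third-party REST call; returns an HTTP status."""
    time.sleep(delay_s)
    return 200


tracer = Tracer()
trace_id = uuid.uuid4().hex  # one ID ties together every span of this request
for api, delay in [("payments-api", 0.01), ("geo-api", 0.2), ("crm-api", 0.02)]:
    with tracer.span(trace_id, api) as s:
        s.status_code = call_api(api, delay)

for s in tracer.spans:
    print(f"{s.name:<14} {s.duration_ms:7.1f} ms  HTTP {s.status_code}")
print("slowest dependency:", tracer.slowest().name)
```

The per-dependency timing is exactly what flow logs (no HTTP visibility) and plain request logs (no correlated timing) struggle to provide.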

Question 8

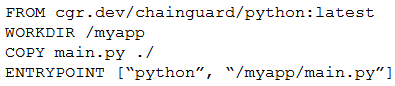

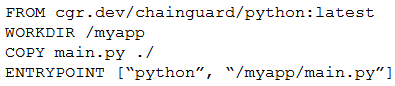

A cloud engineer is reviewing the following Dockerfile to deploy a Python web application:

Which of the following changes should the engineer make to the file to improve container security?

- A. Add the instruction USER nonroot.

- B. Change the version from latest to 3.11.

- C. Remove the ENTRYPOINT instruction.

- D. Ensure myapp/main.py is owned by root.

Correct Answer:

A

Explanation:

I agree with the suggested answer A. In container security, the principle of least privilege is paramount. Running a container as the root user (the default) poses a significant risk because if the application is compromised, the attacker gains administrative control over the container and potentially the host. Adding USER nonroot mitigates this risk.

Reason

Option A is correct because specifying USER nonroot ensures the application process runs with restricted permissions. This prevents an attacker from performing administrative tasks, installing malicious packages, or modifying system-level files within the container if they exploit a vulnerability in the Python web application.

Why the other options are not as suitable

- Option B is incorrect because while using a specific version like 3.11 instead of latest improves immutability and build consistency, it is a configuration best practice rather than a direct mitigation for runtime security vulnerabilities compared to the risk of running as root.

- Option C is incorrect because removing ENTRYPOINT would break the container's ability to start the application automatically; it does not enhance security.

- Option D is incorrect because ensuring files are owned by root while the process runs as root provides no security boundary; in fact, the goal is typically to ensure the application user has only the minimum necessary permissions (read/execute) rather than ownership by the superuser.
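
A small sketch that checks a Dockerfile for the two issues discussed here: whether the final build stage switches away from root, and whether the base image is pinned instead of using `latest`. The Dockerfile text below is a hypothetical example, not the one shown in the exam image, and the parser is deliberately naive (it ignores line continuations and build arguments, and assumes the base image does not set its own USER). Note that `USER nonroot` only works if a user with that name exists in the image, for example after creating it with `useradd`.

```python
from typing import List, Optional

ROOT_FINDING = "container runs as root; add a USER instruction for a non-root user"


def lint_dockerfile(text: str) -> List[str]:
    """Return human-readable findings for a Dockerfile's final build stage."""
    findings: List[str] = []
    base_image: Optional[str] = None
    user: Optional[str] = None  # None means no USER seen in the current stage
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        instruction, _, args = line.partition(" ")
        instruction = instruction.upper()
        if instruction == "FROM":
            # Each FROM starts a new stage; skip flags such as --platform=...
            tokens = [t for t in args.split() if not t.startswith("--")]
            base_image = tokens[0] if tokens else None
            user = None
        elif instruction == "USER" and args.split():
            user = args.split()[0]
    # Without USER, the process runs as root (assuming the base image sets no user).
    if user is None or user.split(":")[0] in ("root", "0"):
        findings.append(ROOT_FINDING)
    if base_image:
        name = base_image.split("/")[-1]
        if name.endswith(":latest") or (":" not in name and "@" not in name):
            findings.append(f"base image '{base_image}' is not pinned to a specific tag")
    return findings


# Hypothetical Dockerfiles for illustration.
BEFORE = """\
FROM python:latest
WORKDIR /app
COPY myapp/ /app/myapp/
ENTRYPOINT ["python", "/app/myapp/main.py"]
"""

AFTER = """\
FROM python:3.11-slim
RUN useradd --create-home nonroot
WORKDIR /app
COPY myapp/ /app/myapp/
USER nonroot
ENTRYPOINT ["python", "/app/myapp/main.py"]
"""

for finding in lint_dockerfile(BEFORE):
    print("BEFORE:", finding)
print("AFTER findings:", lint_dockerfile(AFTER))
```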

Question 9

A group of cloud administrators frequently uses the same deployment template to recreate a cloud-based development environment. The administrators are unable to go back and review the history of changes they have made to the template. Which of the following cloud resource deployment concepts should the administrators start using?

- A. Drift detection

- B. Repeatability

- C. Documentation

- D. Versioning

Correct Answer:

D

Explanation:

I agree with the community and the suggested answer of D (Versioning). The core problem described is the inability to review the history of changes made to a template, which is the primary function of version control systems in cloud orchestration.

Reason

Versioning (Option D) is the correct practice for tracking modifications to Infrastructure as Code (IaC) or deployment templates over time. It provides a historical audit trail, allowing administrators to see who changed what and when, and facilitates the ability to roll back to a known-good state if a new change introduces errors.

Why the other options are not as suitable

- Option A is incorrect because Drift detection is used to identify discrepancies between the defined state in a template and the actual state of live resources in the cloud; it does not track historical changes to the template file itself.

- Option B is incorrect because Repeatability is a goal achieved by using templates (ensuring the environment is the same every time it is deployed), but it does not inherently provide a mechanism for change history.

- Option C is incorrect because while Documentation might describe the purpose of a template, it is a manual process that lacks the automated change tracking, diffing, and rollback capabilities provided by a version control system.
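
In practice, versioning means keeping the template in a version control system such as Git. The sketch below shows the underlying idea with only the standard library: every saved revision is kept with an author, a message, and a content hash, any two revisions can be diffed, and a rollback is recorded as a new revision. The class and field names are hypothetical, and the template snippet is an illustrative assumption.

```python
import difflib
import hashlib
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Revision:
    number: int
    author: str
    message: str
    content: str

    @property
    def digest(self) -> str:
        # Short content hash, similar in spirit to a commit ID.
        return hashlib.sha256(self.content.encode("utf-8")).hexdigest()[:12]


class TemplateHistory:
    """Append-only history of a deployment template (hypothetical helper)."""

    def __init__(self) -> None:
        self.revisions: List[Revision] = []

    def commit(self, author: str, message: str, content: str) -> Revision:
        rev = Revision(len(self.revisions) + 1, author, message, content)
        self.revisions.append(rev)
        return rev

    def diff(self, old: int, new: int) -> str:
        a, b = self.revisions[old - 1], self.revisions[new - 1]
        return "".join(difflib.unified_diff(
            a.content.splitlines(keepends=True),
            b.content.splitlines(keepends=True),
            fromfile=f"template.yaml@r{a.number}",
            tofile=f"template.yaml@r{b.number}",
        ))

    def rollback(self, author: str, to: int) -> Revision:
        # A rollback is a new revision whose content matches an older one,
        # so the history of the bad change is preserved rather than erased.
        target = self.revisions[to - 1]
        return self.commit(author, f"Roll back to r{to}", target.content)


history = TemplateHistory()
history.commit("alice", "Initial dev environment", "instance_type: t3.small\nreplicas: 1\n")
history.commit("bob", "Scale up for load test", "instance_type: t3.large\nreplicas: 3\n")
print(history.diff(1, 2))
history.rollback("alice", to=1)
for r in history.revisions:
    print(r.number, r.digest, r.author, r.message)
```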

Question 10

A government agency in the public sector is considering a migration from on-premises to the cloud. Which of the following are the most important considerations for this cloud migration? (Choose two.)

- A. Compliance

- B. IaaS vs. SaaS

- C. Firewall capabilities

- D. Regulatory

- E. Implementation timeline

- F. Service availability

Correct Answer:

AD

Explanation:

I agree with the community and suggested answer of AD. For public sector and government entities, the legal frameworks governing data handling are the primary blockers and prerequisites for any cloud adoption.

Reason

Compliance (Option A) is critical because government agencies must verify that cloud service providers (CSPs) meet specific standards like FedRAMP, CJIS, or HIPAA before any data migration can occur. Regulatory (Option D) considerations are equally vital as they encompass the legal mandates regarding data residency (where data is physically stored), data sovereignty, and specific public sector laws that dictate how government information must be managed and protected.

Why the other options are not as suitable

- Option B is incorrect because while choosing between IaaS and SaaS is a technical and operational decision, it is secondary to whether the platform is legally allowed to host government data.

- Option C is incorrect because firewall capabilities are specific technical controls that are part of a broader security architecture, rather than a primary business-level migration consideration for the public sector.

- Option E is incorrect because the implementation timeline is a project management factor; while important for planning, it does not carry the same weight as legal and regulatory mandates in a government context.

- Option F is incorrect because service availability (SLAs) is a standard requirement for all cloud customers, whereas compliance and regulatory needs are uniquely heightened and restrictive for government agencies.
